A Case Study on “Measurement Operation Failed” Errors

1. Introduction

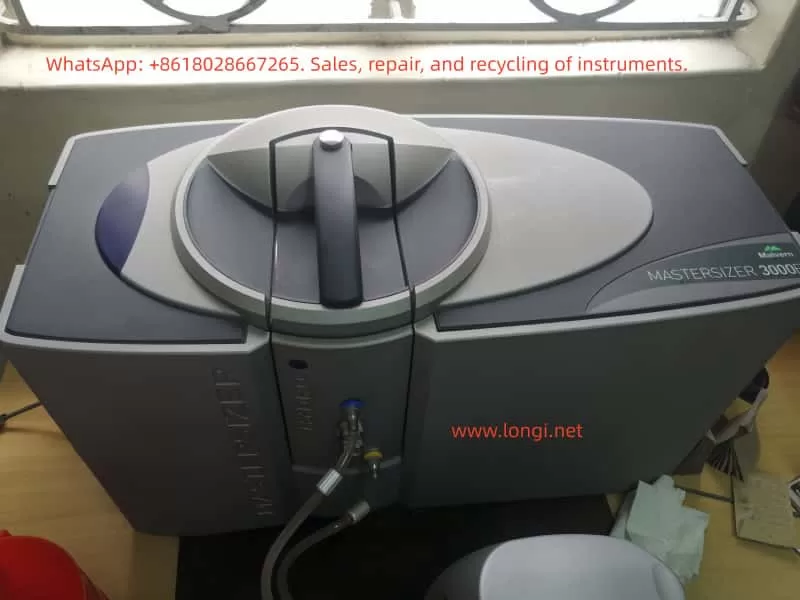

In particle size analysis, the Malvern Mastersizer 3000E is one of the most widely used laser diffraction particle size analyzers in laboratories worldwide. It can rapidly and accurately determine particle size distributions for powders, emulsions, and suspensions. To accommodate different dispersion requirements, the system is usually equipped with either wet or dry dispersion units. Among these, the Hydro EV wet dispersion unit is commonly used due to its flexibility, ease of operation, and automation features.

In routine use, however, operators often encounter errors at initialization, such as the following messages:

- “A problem has occurred during initialisation”

- “Measurement operation has failed”

These errors prevent the system from completing background measurements and optical alignment, effectively stopping any further sample analysis.

This article focuses on these common issues. It provides a technical analysis covering the working principles, system components, error causes, troubleshooting strategies, preventive maintenance, and a detailed case study based on real laboratory scenarios. The aim is to help users systematically identify the root cause of failures and restore the system to full operation.

2. Working Principles of the Mastersizer 3000E and Hydro EV

2.1 Principle of Laser Diffraction Particle Size Analysis

The Mastersizer 3000E uses the laser diffraction method to measure particle sizes. The principle is as follows:

- When a laser beam passes through a medium containing dispersed particles, scattering occurs.

- Small particles scatter light at large angles, while large particles scatter light at small angles.

- An array of detectors measures the intensity distribution of the scattered light.

- Using Mie scattering theory (or the Fraunhofer approximation), the system calculates the particle size distribution.

Thus, accurate measurement depends on three critical factors:

- Stable laser output

- Well-dispersed particles in the sample without bubbles

- Proper detection of scattered light by the detector array
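To make the size-to-angle relationship above concrete, here is a minimal Python sketch based on the Fraunhofer approximation for the first dark ring of the diffraction pattern, sin θ = 1.22 λ / d. The 632.8 nm wavelength is an assumption (a red He-Ne line; confirm it against your instrument's specification), the function name is illustrative, and the refractive index of the dispersant is ignored. It only shows the trend and is not how the instrument software computes a size distribution.

```python
import math

# Assumption: red He-Ne laser line; check the specification of your instrument.
WAVELENGTH_NM = 632.8


def first_minimum_angle_deg(diameter_um, wavelength_nm=WAVELENGTH_NM):
    """Angle (degrees) of the first dark ring for a particle of the given diameter.

    Uses sin(theta) = 1.22 * wavelength / diameter, which is only meaningful
    when the particle is clearly larger than the wavelength.
    """
    wavelength_um = wavelength_nm / 1000.0
    ratio = 1.22 * wavelength_um / diameter_um
    if ratio >= 1.0:
        raise ValueError("particle too small for the Fraunhofer approximation")
    return math.degrees(math.asin(ratio))


if __name__ == "__main__":
    # Larger particles give smaller angles, as described in the list above.
    for d in (1.0, 10.0, 100.0, 1000.0):
        print(f"{d:7.1f} um -> first minimum at {first_minimum_angle_deg(d):.3f} deg")
```

For particles close to or below the wavelength the approximation breaks down (the sketch raises an error), which is why Mie theory is needed for fine particles.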

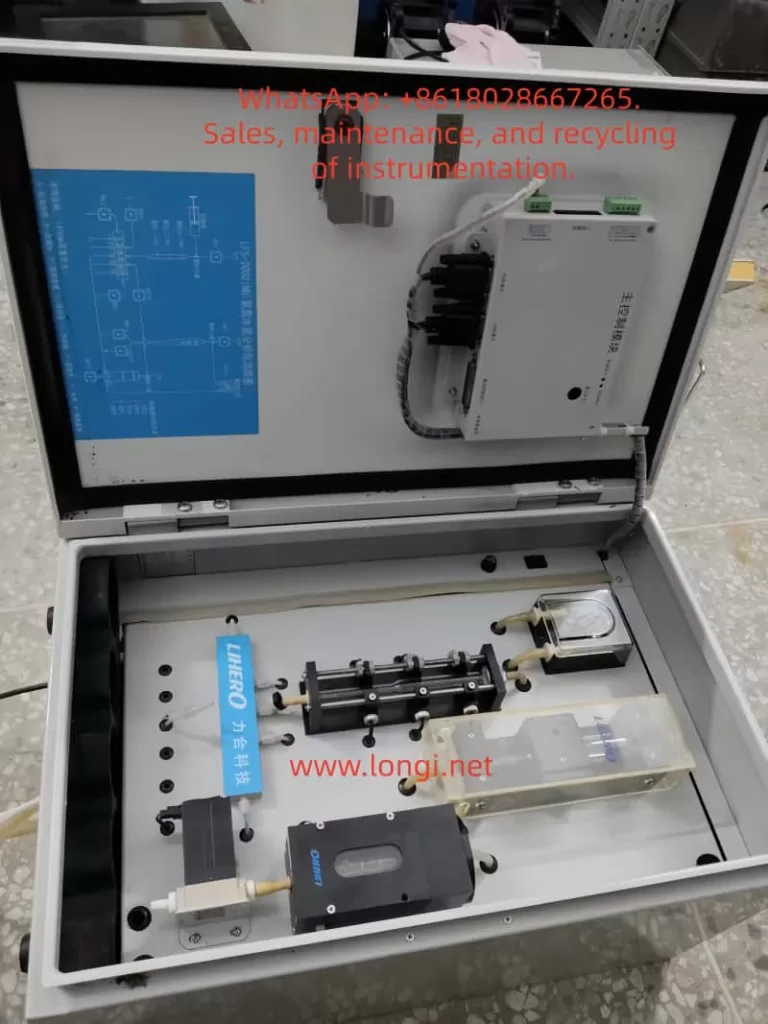

2.2 Role of the Hydro EV Wet Dispersion Unit

The Hydro EV serves as the wet dispersion accessory of the Mastersizer 3000E. Its main functions include:

- Sample dispersion – Stirring and circulating liquid to ensure that particles are evenly suspended.

- Liquid level and flow control – Equipped with sensors and pumps to maintain stable liquid conditions in the sample cell.

- Bubble elimination – Reduces interference from air bubbles in the optical path.

- Automated cleaning – Runs flushing and cleaning cycles to prevent cross-contamination.

The Hydro EV connects to the main system via tubing and fittings, and all operations are controlled through the Mastersizer software.

3. Typical Error Symptoms and System Messages

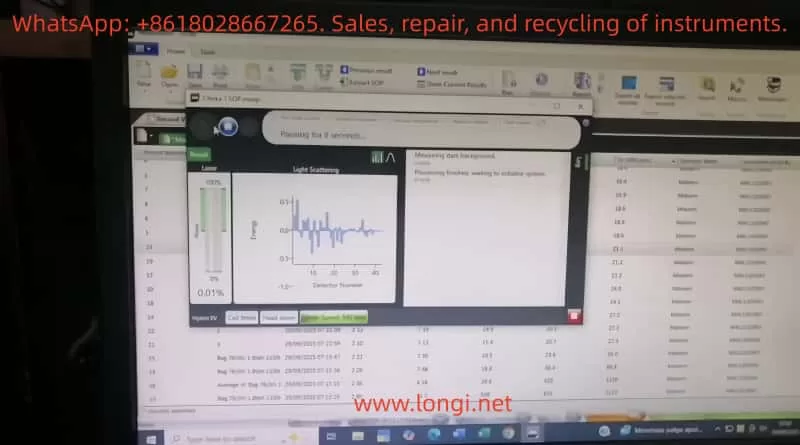

Operators often observe the following system messages:

- “A problem has occurred during initialisation… Press reset to retry”

  - Indicates a failure during system checks such as background measurement, alignment, or hardware initialization.

- “Measurement operation has failed”

  - Means the measurement process was interrupted or aborted due to a hardware or software malfunction.

- Stuck at “Measuring dark background / Aligning system”

  - Suggests the optical system cannot establish a valid baseline or align properly.

4. Root Causes of Failures

Based on experience and manufacturer documentation, the failures can be classified into the following categories:

4.1 Optical System Issues

- Laser not switched on or degraded laser power output

- Contamination, scratches, or condensation on optical windows

- Optical misalignment preventing light from reaching detectors

4.2 Hydro EV Dispersion System Issues

- Air bubbles in the liquid circuit cause unstable signals

- Liquid level sensors malfunction or misinterpret liquid presence

- Pump or circulation failure

- Stirrer malfunction or abnormal speed

4.3 Sample and User Operation Errors

- Sample concentration too low, producing nearly no scattering

- Sample cell incorrectly installed or not sealed properly

- Large bubbles or contaminants present in the sample liquid

4.4 Software and Communication Errors

- Unstable USB or hardware communication

- Software version mismatch or system crash

- Incorrect initialization parameters (e.g., threshold, dispersion mode)

4.5 Hardware Failures

- Malfunctioning detector array

- Damaged internal electronics or control circuits

- End-of-life laser module requiring replacement

5. Troubleshooting and Resolution Path

To efficiently identify the source of the problem, troubleshooting should follow a layered approach:

5.1 Restart and Reset

- Close the software and power down the instrument, wait several minutes, then restart both.

- Press Reset in the software and attempt initialization again.

5.2 Check Hydro EV Status

- Confirm fluid is circulating properly.

- Ensure liquid level sensors detect the liquid.

- Run the “Clean System” routine to verify pump and stirrer functionality.

5.3 Inspect Optical and Sample Cell Conditions

- Remove and thoroughly clean the cuvette and optical windows.

- Confirm correct installation of the sample cell.

- Run a background measurement with clean water to rule out bubble interference.

5.4 Verify Laser Functionality

- Check whether laser power levels change in software.

- Visually confirm the presence of a laser beam if possible.

- If the laser does not switch on, the module may require service.

5.5 Communication and Software Checks

- Replace USB cables or test alternate USB ports.

- Install the software on another PC and repeat the test.

- Review software logs for detailed error codes.

5.6 Hardware Diagnostics

- Run built-in diagnostic tools to check subsystems.

- If detectors or control circuits fail the diagnostics, service or replacement is required.
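The layered order of sections 5.1 to 5.6 can be sketched as a simple checklist helper. This is a hypothetical illustration: the layer labels mirror the headings above, the results are entered by the operator, and nothing in it communicates with the instrument or the Mastersizer software.

```python
from typing import Dict, Optional, Tuple

# Layers in the order given in sections 5.1 to 5.6.
LAYERS = (
    "5.1 Restart and reset",
    "5.2 Hydro EV status",
    "5.3 Optical and sample cell conditions",
    "5.4 Laser functionality",
    "5.5 Communication and software",
    "5.6 Hardware diagnostics",
)


def next_step(results: Dict[str, bool]) -> Optional[Tuple[str, str]]:
    """Return the first layer that still needs attention, or None if all passed.

    `results` maps a layer label to True (checked, no fault found) or
    False (checked, fault found). Layers missing from `results` count as
    not yet checked.
    """
    for layer in LAYERS:
        if layer not in results:
            return layer, "not checked yet"
        if not results[layer]:
            return layer, "failed: resolve before moving on"
    return None


if __name__ == "__main__":
    # Example: restart done, but the Hydro EV check found a problem.
    print(next_step({LAYERS[0]: True, LAYERS[1]: False}))
```

Working strictly in order keeps the cheap checks (restart, liquid circulation, cleaning) ahead of the expensive ones (laser service, hardware replacement).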

6. Preventive Maintenance Practices

To reduce the likelihood of these failures, users should adopt the following practices:

- Routine Hydro EV Cleaning

  - Flush tubing and reservoirs with clean water after each measurement.

- Maintain Optical Window Integrity

  - Regularly clean using lint-free wipes and suitable solvents.

  - Prevent scratches or deposits on optical surfaces.

- Monitor Laser Output

  - Check laser power readings in software periodically.

  - Contact manufacturer if output decreases significantly.

- Avoid Bubble Interference

  - Introduce samples slowly.

  - Use sonication or degassing techniques if necessary.

- Keep Software and Firmware Updated

  - Install recommended updates to avoid compatibility problems.

- Maintain Maintenance Logs

  - Document cleaning, servicing, and errors for historical reference.
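As one way to combine the laser monitoring and maintenance log practices, the sketch below reads laser power readings from a manually kept CSV log and reports how far the latest reading has dropped relative to an early baseline. The CSV layout, column names, sample values, and the 10% alert threshold are all assumptions made for illustration; they do not come from the manufacturer or from the Mastersizer software.

```python
import csv
import io
from statistics import mean

# Made-up example log; a real log would be kept by the laboratory.
SAMPLE_LOG = """date,laser_power,note
2024-01-08,80.0,weekly check
2024-01-15,80.0,weekly check
2024-01-22,80.0,windows cleaned
2024-01-29,76.0,weekly check
2024-02-05,68.0,weekly check
"""


def laser_power_drop(rows, baseline_count=3):
    """Fractional drop of the latest reading versus the mean of the first readings."""
    readings = [float(row["laser_power"]) for row in rows]
    if len(readings) <= baseline_count:
        raise ValueError("need more readings than the baseline window")
    baseline = mean(readings[:baseline_count])
    return (baseline - readings[-1]) / baseline


if __name__ == "__main__":
    rows = list(csv.DictReader(io.StringIO(SAMPLE_LOG)))
    drop = laser_power_drop(rows)
    ALERT_THRESHOLD = 0.10  # hypothetical; choose a value with your service provider
    status = "contact service" if drop > ALERT_THRESHOLD else "ok"
    print(f"drop vs. baseline: {drop:.1%} ({status})")
```

Keeping the readings in a plain file makes a gradual decline visible long before the laser fails an initialization.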

7. Case Study: “Measurement Operation Failed”

7.1 Scenario Description

- Error sequence during initialization: “Measuring dark background” → “Aligning system” → “Measurement operation has failed.”

- Hardware setup: Mastersizer 3000E with Hydro EV connected.

- Likely cause: Bubbles or unstable liquid flow in the Hydro EV, preventing valid background detection.

7.2 Troubleshooting Actions

- Reset and restart system.

- Check tubing and liquid circulation – purge air bubbles and confirm stable flow.

- Clean sample cell and optical windows – ensure transparent pathways.

- Run background measurement – if failure persists, test laser operation.

- Software and diagnostics – record log files, run diagnostic tools, and escalate to manufacturer if necessary.
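The "confirm stable flow" and "run background measurement" steps can be made more objective by noting several successive background readings and checking how much they scatter. The sketch below uses the coefficient of variation for this. The readings, the 5% limit, and the function names are hypothetical; the values would be read off the software display by hand, and a suitable limit depends on the instrument and dispersant.

```python
from statistics import mean, pstdev


def background_cv(readings):
    """Coefficient of variation (standard deviation / mean) of the readings."""
    average = mean(readings)
    if average == 0:
        raise ValueError("mean background is zero")
    return pstdev(readings) / average


def is_stable(readings, max_cv=0.05):
    """True when the readings scatter by no more than max_cv around their mean."""
    return background_cv(readings) <= max_cv


if __name__ == "__main__":
    steady = [100, 101, 99, 100, 100]   # made-up values: bubble-free circulation
    bubbly = [100, 140, 95, 180, 90]    # made-up values: bubbles crossing the beam
    print("steady stable?", is_stable(steady))
    print("bubbly stable?", is_stable(bubbly))
```

A background that fails such a check after purging and cleaning points away from the liquid circuit and toward the laser or detectors.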

7.3 Key Lessons

This case illustrates that background instability and optical interference are among the most common causes of initialization errors. By stabilizing the dispersion (the Hydro EV liquid system) and keeping the optical path clean, most such problems can be resolved without hardware replacement.

8. Conclusion

The Malvern Mastersizer 3000E with the Hydro EV wet dispersion unit is a powerful and versatile solution for particle size analysis. Nevertheless, operational errors and system failures such as “Measurement operation has failed” can significantly disrupt laboratory workflow.

Through technical analysis, these failures can generally be attributed to five categories: optical issues, dispersion system problems, sample/operation errors, software/communication faults, and hardware damage.

This article outlined a systematic troubleshooting workflow:

- Restart and reset

- Verify Hydro EV operation

- Inspect optical components and cuvette

- Confirm laser activity

- Check software and communication

- Run hardware diagnostics

Additionally, preventive maintenance strategies—such as cleaning, monitoring laser performance, and preventing bubbles—are critical for long-term system stability.

By applying these structured troubleshooting and maintenance practices, laboratories can minimize downtime, extend the instrument’s lifetime, and ensure reliable particle size measurements.